Quality Requirements and Standards

The Main Meaning of Milan

After so many presentations, and so many articles, it wouldn't be surprising if readers and laboratorians were confused by the goals of the 2014 Milan Meeting. Here's a quick summary of three top take-aways from the conference.

The Main Meaning of Milan 2014

Sten Westgard

December 2014

1. A Restatement of the Ricos Goals? Reducing specifications, restricting the studies included in the database.

In the past 15 years, most laboratory opinion has drifted toward the use of quality specifications derived from biologic variation, which we often affectionately refer to as the "Ricos Goals." There were several presentations noting significant limitations and statistical weaknesses in the database construction, including a paucity of data for most of the analytes and a lack of rigor in the studies included in the database, both of which may lead to inaccurate estimates of allowable imprecision, bias, and total error. While the conference set up working groups to review, correct, amend, and improve the database study selection process, in the interim, laboratories are still encouraged to use the existing database specifications. However, the bloom is off the rose. Now those numbers, once deemed the very best, have to be taken with a grain of salt. It has been well known that some of the database specifications are so stringent that no current method on the market can achieve that level of performance. As the database is modified, it is likely that more analytes will have specifications that are not practically achievable. Which leads us to finding number 2...

2. A Multiplicity of Models

The Milan Draft consensus simplifies the five levels of the Stockholm Hierarchy into just three models for determining specifications. The first model is based on examining the clinical use and outcome. The second model is based on knowledge about biologic variation. And the third model, a catch-all, is "state of the art". As more and more analyte specifications from the biologic variation database become impractical, those analytes will still need to have quality specifications, but they will have to come from a different model. When analytes have well-defined roles in clinical use, model 1 can be used to provide a quality specification (for example, HbA1c and Troponin I). But for analytes where there are no clear single-test uses, or where multiple factors are taken into account for clinical care, only the "State of the Art" model will be available.

3. A Working group on Total Error, "To come up with a proposal for how to use the total error concept or if it should be used at all"

While all the other models in the meeting were presented unchallenged, Total Error was singled out for criticism. Actually, it wasn't Total Error that was directly criticized, but, put more accurately, the calculation of Total Error from the data on biological variation. Dr. Oosterhuis pointed out that laboratories should not have the maximum allowable bias at the same time they have the maximum allowable imprecision - that would be a gross overestimate of allowable error. Thus, if allowable total errors are to be formulated from the biologic variation database, they will have to be calculated in such a way that makes them much smaller. Again, this means that the quality specifications coming out of the database will be smaller, tighter, more demanding, and less practical. Ricos goals may well become mostly unreachable.
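To make the arithmetic behind this criticism concrete, here is a minimal sketch of how allowable total error is conventionally built from biologic variation data. The formulas are the commonly cited "desirable" specifications (allowable imprecision as half the within-subject CV, allowable bias as a quarter of the combined within- and between-subject variation, and total error as 1.65 times the imprecision plus the bias); they are not spelled out in this article. The function name and the input values are hypothetical, chosen only for illustration.

```python
import math


def desirable_specs(cv_i, cv_g, z=1.65):
    """Conventional 'desirable' specifications from biologic variation.

    cv_i: within-subject biologic variation (%), cv_g: between-subject (%).
    Returns (allowable imprecision, allowable bias, allowable total error),
    all in percent. The z multiplier of 1.65 is the usual one-sided 95% value.
    """
    cv_a = 0.5 * cv_i                               # maximum allowable imprecision
    bias_a = 0.25 * math.sqrt(cv_i**2 + cv_g**2)    # maximum allowable bias
    tea = z * cv_a + bias_a                         # linear sum of both maxima
    return cv_a, bias_a, tea


# Hypothetical analyte, not taken from the database
cv_a, bias_a, tea = desirable_specs(cv_i=6.0, cv_g=8.0)
print(f"allowable CV:   {cv_a:.2f}%")
print(f"allowable bias: {bias_a:.2f}%")
print(f"allowable TE:   {tea:.2f}%")
```

Note that the last step simply adds the maximum allowable bias to the maximum allowable imprecision term. That is the point being objected to: a lab sitting at both maxima at once would be granted the full sum, so any recalculation that avoids this will produce a smaller number.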

This particular criticism of Total Error is being conflated with an older argument against the Total Error concept, which is an objection to the existence, allowance, and/or tolerance of bias not only in the equation, but in the laboratory world. That is, to account for bias in Total Error is to allow it to exist, which is a bad thing. Instead, we need to be more intolerant of bias and extinguish and eliminate it at every step. Another objection is that bias and imprecision are different animals, behave differently, and shouldn't be combined, at least not linearly. More complicated models may empower a laboratory to calculate the allowable existence of bias at the expense of practicality and universality: an allowable bias that cannot be generalized for labs, only calculated for specific labs for their own individual use.

Examining these recommendations - in an alternate context

Let's step back from this argument, and take a side step into a world that we all know about: driving and speed limits.

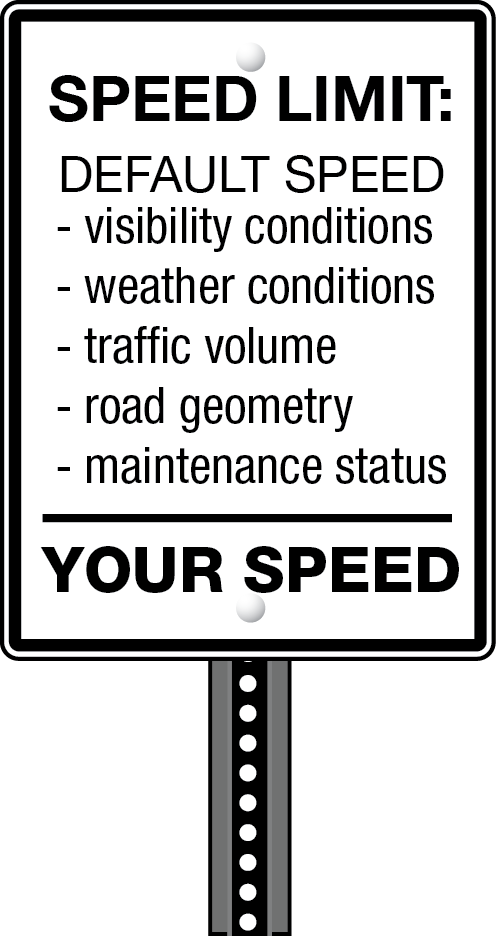

When I drive down a Connecticut highway, on the side of the road is a speed limit sign. It's stated in miles per hour [I know, it's not in international units]. It's just one number. Now if I consult the Connecticut State Driver's Manual, it tells me "You must comply with speed limits. They are based on the design of the road and the types of vehicles that use them. They take into account things you cannot see, such as side roads and driveways where people may pull out suddenly and the amount of traffic on that road." So interestingly, this one number takes into account many different factors, some factors that don't really combine well in an equation, but nevertheless allow the transportation department to set a practical, easy-to-use specification for driving. But the Driver's Manual cautions me, "Remember, speed limits are posted for ideal conditions. If the road is wet or icy, if you cannot see well, or if traffic is heavy, you must slow down. Even if you are driving the posted speed limit, you can get a ticket for traveling too fast for road conditions." This is another one of those George Box moments, where the model and the goal are not pristine and perfect. There are flaws in each estimate and no one number can encompass every condition. So we have to incorporate multiple factors into our budget and accept that this speed limit is a guideline, but not perfect for every scenario.

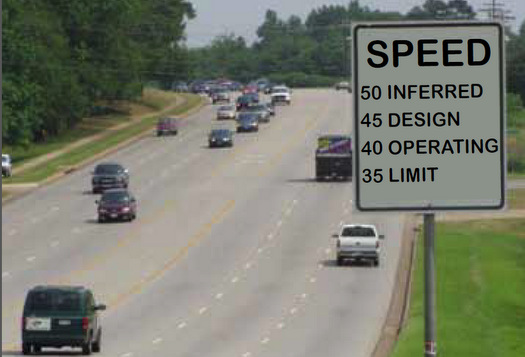

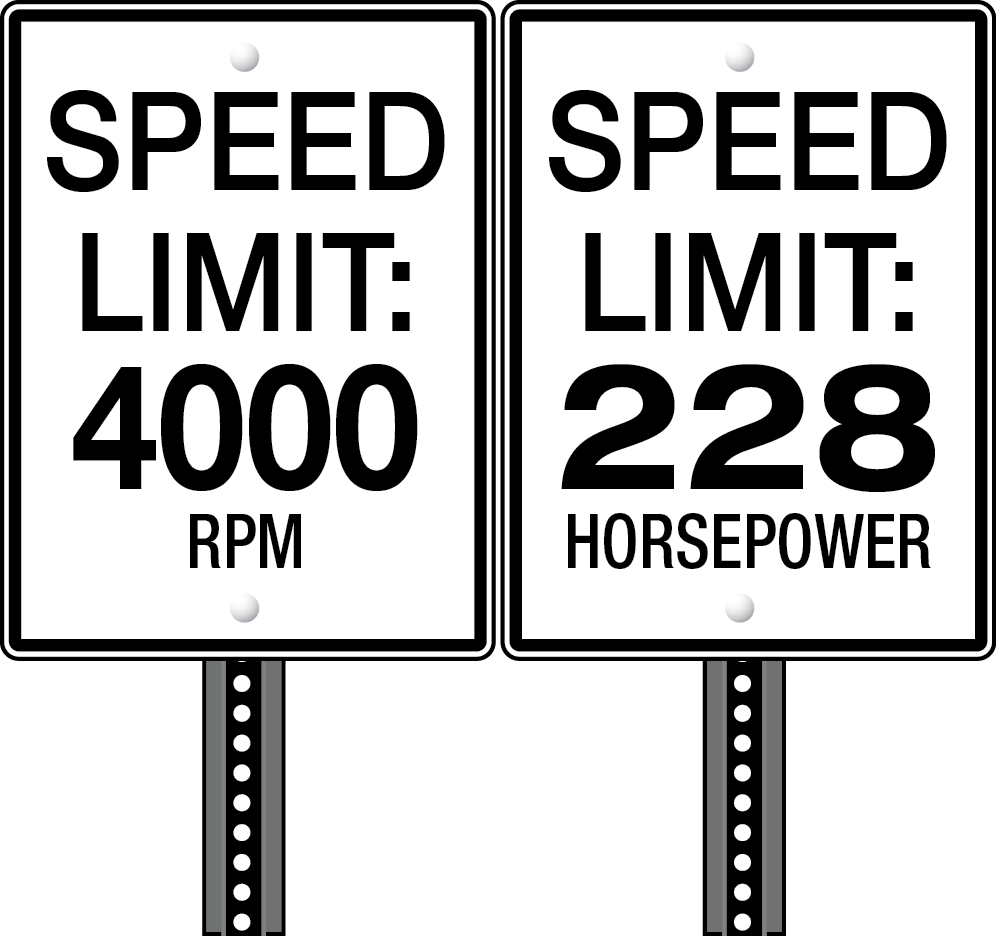

Now we could actually change our specifications entirely. We could, perhaps more precisely, define just what the horsepower and the revolutions per minute should be for the automobile traveling down the road. From those specifications, we know that we could calculate torque. Torque would then allow us to know how fast the car can accelerate. But even if we know that, we would still need to know the force being applied to the wheels, which is affected by the transmission, the differentials, and the condition of the tires, all of which multiply the engine's torque. We know that "under the hood" a car engine is particularly complicated, so keeping an eye on some of the key variables is a good way to monitor the performance. And if we're hitting the ideal RPM and we have the ideal horsepower, we know we're also probably achieving the appropriate speed. But perhaps we're busy driving and it's not so easy to monitor two different variables at once.

We could simplify the specification to a single variable, the one most directly connected to the engine, the pure truth of what the engine is achieving in performance. This could be a number, indeed, that the manufacturer could state to us when we purchase the car. This particular number, unfortunately, leaves out a lot of factors, but it has the simplicity of being one specification. You don't know the ultimate speed directly from RPM, but if you want to, you can always calculate it. The difficulty here is finding the balance: being simple without being simplistic. If we over-simplify, or drill down too deeply into the details, we risk losing sight of some of the other variables.

Finally, there is yet another approach, where you take into account all the variables you can possibly find. In this approach, your engine speed can't be generalized at all; it is always a product of a set of multiple changing conditions that are unique to your situation. Your car isn't like any other car, so why should we generalize a speed limit for all these unique cars and trucks and motorcycles? Shouldn't they all have different speed limits that depend on their driving abilities, their engine capability, their safety record, etc.? But we can all admit it is undoubtedly inconvenient to have each driver calculate their own speed limit every time they get on a road. A general rule of thumb is easier.

So there are multiple ways we could approach a specification of speed limit, yet we inevitably end up with just one number. Imperfect, yes. But practical. Now I must admit that speed limits are not a perfect analogy for analytical quality goals. The debates about the details of allowable imprecision, bias, and total error don't translate directly into the driving world. But it is useful to think about how we set limits or goals in the real world. As we contemplate the next generation of quality specifications for laboratories, we need to balance the practical with the perfect, and not make the perfect be the enemy of the good.

We invite you to share your views - take our poll (December 2014) on Quality Specifications. If we can get 200+ responses, we'll share the results in 2015...